Demystifying AI: DIY vs Tech Guru

On this page, you can access the slides and notes from Cegos's recent webinar Demystifying AI: DIY vs Tech Guru, presented in association with the Talent & Leadership Club.

AI doesn’t have to be mysterious - or reserved for tech gurus. In this fast-paced session, discover what today’s AI models do well (and where they fall short), learn practical tools you can use, and explore when DIY is enough - and when expert insight from Cegos Group makes the difference.

In the session, we will establish the core principles of enhancing productivity with AI: what the available models do well, where they lag behind, and how to put them at your fingertips (even if you're not the most techy). We will cover a broad variety of practical applications you can use straight away. We will also share select insights from Cegos Group’s recent white papers and consider where bringing in tech gurus might be most beneficial.

Presented by Ross Hardy

Ross Hardy is Associate Director of IT at Cegos UK, an international leader in learning and development. Ross is an early adopter of AI who has integrated it deeply into his tech stack, using it to increase his team’s productivity and expand what he can build.

Notes

1. Opening: The Hype vs. The Reality (8 min)

- The productivity paradox: research shows real gains, but time saved is often consumed by verification, debugging, and adapting outputs to business processes

- The "brilliant but unreliable intern" framing — introduce it here as the lens for the whole session (Jamie Bartlett, How to Talk to AI)

- A brief, honest map of where AI sits today: genuinely transformative in some areas, genuinely overhyped in others

2. What LLMs Actually Are—and Aren't (10 min)

- Creativity vs. Knowledge: LLMs excel at making unexpected semantic connections, not at storing and accurately regurgitating facts

- They are pattern-completion engines, not search engines or databases — a crucial distinction most users never grasp

- The knowledge cutoff and the confidence problem: models don't know what they don't know, and they'll fill gaps with plausible-sounding fiction

- Hallucinations and error rates: not a bug that will be fixed — a structural feature of how these systems work
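To make the pattern-completion point concrete, here is a deliberately tiny Python sketch. It is an analogy, not how production LLMs are built: a hypothetical bigram counter (`train_bigrams`, `complete`) that only learns which word tends to follow which, then extends a prompt with the most frequent follower. It has no store of facts to consult, so it will happily produce a fluent but false completion.

```python
from collections import Counter, defaultdict


def train_bigrams(text: str) -> dict[str, Counter]:
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    model: dict[str, Counter] = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        model[current][following] += 1
    return model


def complete(model: dict[str, Counter], start: str, length: int) -> str:
    """Greedily extend `start` by up to `length` words, always picking the
    most frequent follower. There is no fact lookup, only pattern counts."""
    out = [start.lower()]
    for _ in range(length):
        followers = model.get(out[-1])
        if not followers:  # unseen word: nothing to continue with
            break
        out.append(followers.most_common(1)[0][0])
    return " ".join(out)


corpus = (
    "the capital of france is paris . "
    "the capital of spain is madrid . "
    "the city of love is paris ."
)
model = train_bigrams(corpus)
print(complete(model, "spain", 2))  # → spain is paris
```

Asked to continue "spain", the toy model answers "spain is paris": in its tiny corpus "is" was followed by "paris" more often than by "madrid", and nothing in the model checks the claim. Real LLMs are vastly more capable, but the underlying point holds: output is driven by learned patterns rather than lookup, which is why verification matters.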

3. Practical Applications: Where to Actually Use This (18 min)

High-impact use cases:

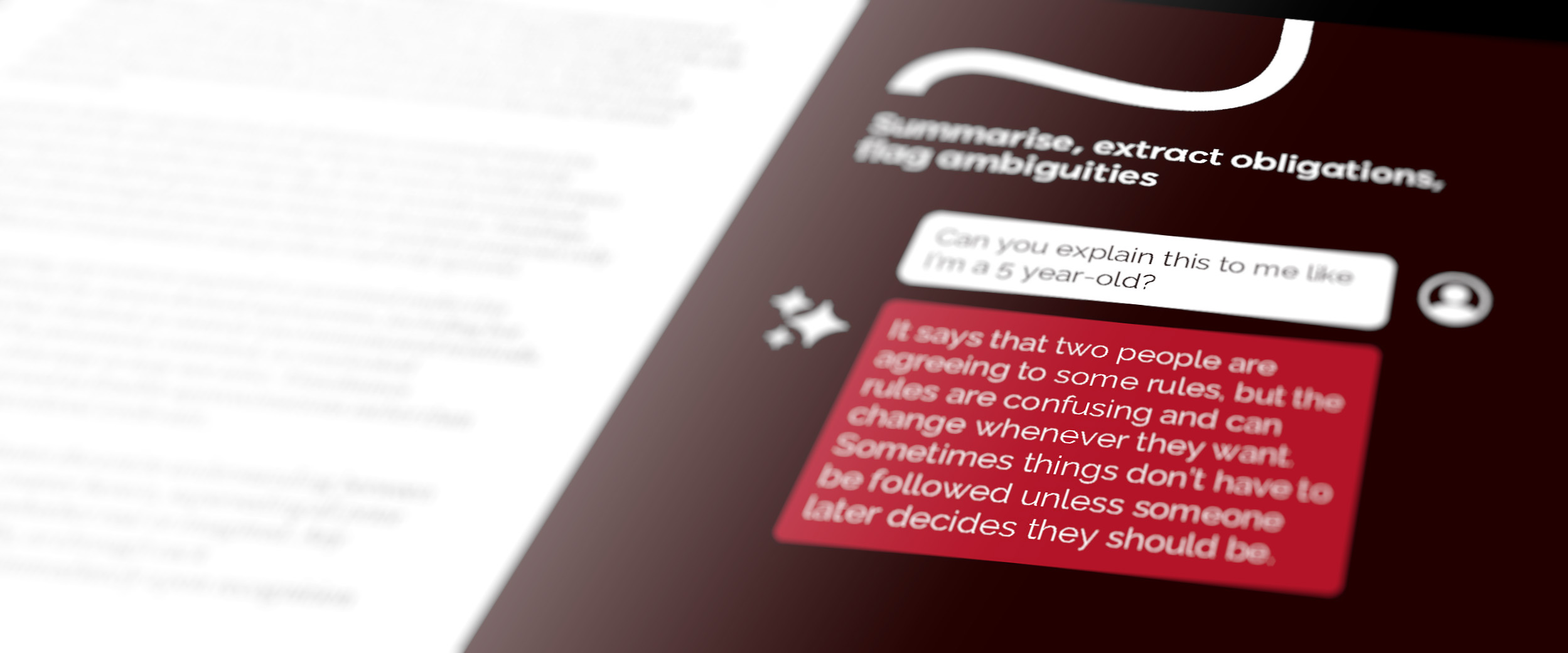

- Parsing legalese, contracts, and long documents — summarise, extract obligations, flag ambiguities

- Drafting and rewriting communications — emails, reports, policy documents: AI as a first-draft engine, human as editor

- Code assistance and scripting — even for non-developers: automating repetitive Excel/Power Automate tasks, writing SQL queries, generating regex, basic Python scripts for data wrangling

- Meeting notes and synthesis — feeding transcripts or rough notes into an LLM to produce structured summaries and action items

- Research triage — rapidly surveying an unfamiliar topic to know what questions to ask, not to get final answers

- Internal knowledge base queries — using AI interfaces on top of your own documentation (with appropriate tooling)
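As an illustration of the code-assistance use case, below is the kind of short data-wrangling script a non-developer might ask an LLM to draft: pull email addresses out of a messy CSV column, lower-case them and remove duplicates. It is a sketch under stated assumptions, not a recommended standard; the `contact` column, the hypothetical `extract_emails` function and the simplified email regex are all illustrative. Treat any generated script the same way: run it on a small sample you can check by hand before trusting it on real data.

```python
import csv
import io
import re

# Assumption: a loose, practical pattern for email addresses. Real email
# syntax is more permissive; this is an approximation, not a validator.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def extract_emails(csv_text: str, column: str) -> list[str]:
    """Return unique, lower-cased email addresses found in one CSV column,
    in the order they first appear."""
    seen: set[str] = set()
    result: list[str] = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        # A missing column or empty cell is treated as "no emails".
        for match in EMAIL_RE.findall(row.get(column) or ""):
            email = match.lower()
            if email not in seen:
                seen.add(email)
                result.append(email)
    return result


# Hypothetical sample export: messy separators, duplicates, a quoted cell.
sample = (
    "name,contact\n"
    "Ana,ana@example.com; backup ANA@example.com\n"
    "Ben,no email on file\n"
    'Cal,"cal.smith@example.co.uk, ben@example.org"\n'
)
print(extract_emails(sample, "contact"))
```

Checking the printed list against the three sample rows takes seconds, and that quick check is exactly the human verification step the session argues every output needs.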

4. Getting Better Outputs: Prompt Engineering That Actually Works (12 min)

- Roleplay and persona prompting: assigning a role can unlock more out-of-the-box thinking and shapes tone and framing

- Think out loud (chain-of-thought): instructing the model to reason step by step before answering can substantially reduce errors, especially on multi-step problems

- Asking for confidence scores and caveats: explicitly prompting the model to flag uncertainty (treat its self-reported confidence as a cue for checking, not a calibrated probability)

- Giving context, not just commands — the more the model knows about your situation, constraints, and audience, the less you have to fix

- Iterative refinement — treating a prompt as a first draft, not a one-shot request; the conversation is the tool

- Negative prompting — telling the model what not to do is often as important as telling it what to do
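The techniques above can be combined into a reusable template. The Python sketch below simply assembles prompt text; it calls no AI service, and the `PromptSpec` fields and `build_prompt` function are hypothetical names chosen for illustration. Each field maps to one technique: role (persona), context, a step-by-step instruction (chain-of-thought), a request to flag uncertainty, and a list of things to avoid (negative prompting).

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical bundle of the prompting techniques listed above."""
    role: str                                       # persona prompting
    task: str                                       # what you want done
    context: str = ""                               # situation, constraints, audience
    avoid: list[str] = field(default_factory=list)  # negative prompting
    step_by_step: bool = True                       # chain-of-thought request
    flag_uncertainty: bool = True                   # ask for confidence and caveats


def build_prompt(spec: PromptSpec) -> str:
    """Assemble the prompt text; this does not send anything to a model."""
    parts = [f"You are {spec.role}."]
    if spec.context:
        parts.append(f"Context: {spec.context}")
    parts.append(f"Task: {spec.task}")
    if spec.step_by_step:
        parts.append("Reason through the problem step by step before giving your final answer.")
    if spec.flag_uncertainty:
        parts.append(
            "Rate your confidence in each key point as high, medium or low, "
            "and flag anything I should verify."
        )
    for item in spec.avoid:
        parts.append(f"Do not {item}.")
    return "\n\n".join(parts)


spec = PromptSpec(
    role="an experienced contracts manager",
    task="Summarise the supplier agreement pasted below and list our obligations.",
    context="The summary is for a non-legal operations team; keep it under 300 words.",
    avoid=["invent clauses that are not in the text", "give legal advice"],
)
print(build_prompt(spec))
```

Iterative refinement then happens in the conversation itself: review the first answer, adjust the context or the `avoid` list, and ask again.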

5. The Risks You Actually Need to Manage (8 min)

- Deskilling — over-reliance degrades the human expertise needed to catch AI errors; the intern analogy bites back

- Data privacy and confidentiality — what you should never put into a public LLM (client data, IP, regulated information); brief mention of enterprise/private deployment options

- The verification burden — every output needs a human owner; AI shifts work, it doesn't eliminate accountability

- Bias and blind spots — models reflect their training data; outputs on sensitive topics need extra scrutiny

- The audit trail problem — AI-assisted decisions are harder to document and defend; worth flagging for compliance-conscious IT environments

6. Our Expertise (4 min)

- Where Cegos Group's white paper insights are most relevant: large-scale deployment, change management, risk frameworks

- Our workshop micro apps use LLMs to help participants surface insights, spot connections, and make sense of their own experiences in real time

Our AI White Papers

Unlocking the power of Generative AI

How can companies adopt AI in a way that is both effective and ethically grounded, while empowering their people? This white paper from Cegos Group, Getting to Grips with AI, offers practical guidance for HR and Learning professionals, outlining how to integrate AI responsibly and develop the human capabilities essential for its successful use.

Impacts of generative AI on learning

How can organisations leverage GenAI to align skills development with strategic goals? Cegos Group’s white paper, Impacts of Generative AI on Learning & Development, examines how AI can enhance training outcomes, streamline learning processes, and support organisational growth.